Today, this logic is being tested anew as donors, technology philanthropists and International Non-Governmental Organisations (INGOs) increasingly encourage West African Civil Society Organisations (CSOs) to adopt artificial intelligence (AI) tools for community data collection, advocacy monitoring, grant reporting, and programme evaluation. But what worked for connectivity cannot simply be transplanted to organisational transformation.

This article argues that the leapfrog model is the wrong lens for AI adoption in civil society. Mobile money succeeded because it solved a problem of access. AI adoption, by contrast, requires robust internal structures before tools can deliver value or be deployed safely.

The Leapfrog Illusion in Civil Society Technology

The civil society sector in West Africa has experienced waves of technology-driven optimism before. The early 2010s brought a surge of enthusiasm for information and communications technology for development (ICT4D), promising that smartphones, open data, and social media would amplify CSOs’ efficiency. A decade on, many CSOs had acquired digital tools but lacked the organisational culture, staff capacity, or data governance practices to use them strategically. Databases went unmaintained. Social media accounts were opened and abandoned. Monitoring and evaluation systems collected data that were never meaningfully analysed.

This pattern revealed that the failure of earlier technology waves was not primarily an issue of connectivity but an issue of readiness. The risk with AI is that the same pattern repeats itself at a greater cost. AI tools trained on distorted data may reproduce deep-seated social inequalities, gaps in data quality, or cultural assumptions, and without adequate organisational preparedness these go unexamined. Tools that process sensitive beneficiary data without governance frameworks expose organisations and beneficiaries to harm.

Donor-funded AI pilots with no continuity plan deepen technological dependency. The leapfrog trap, in this context, is the assumption that CSOs can jump ahead without the foundations that make movement meaningful.

What AI Readiness Requires: The ITHCE Framework

Global AI readiness frameworks, such as those from Oxford Insights and the International Telecommunication Union (ITU), assess readiness at the macro level. They are designed for states and large institutions, not for the operational realities of a CSO running a women's rights programme in Tamale, an environmental monitoring initiative in the Niger Delta, or a civic education campaign in Conakry.

For CSOs, readiness is not about national fibre coverage or AI strategy. It is about whether the organisation has developed interlocking capacities that allow AI tools to be adopted responsibly and governed ethically.

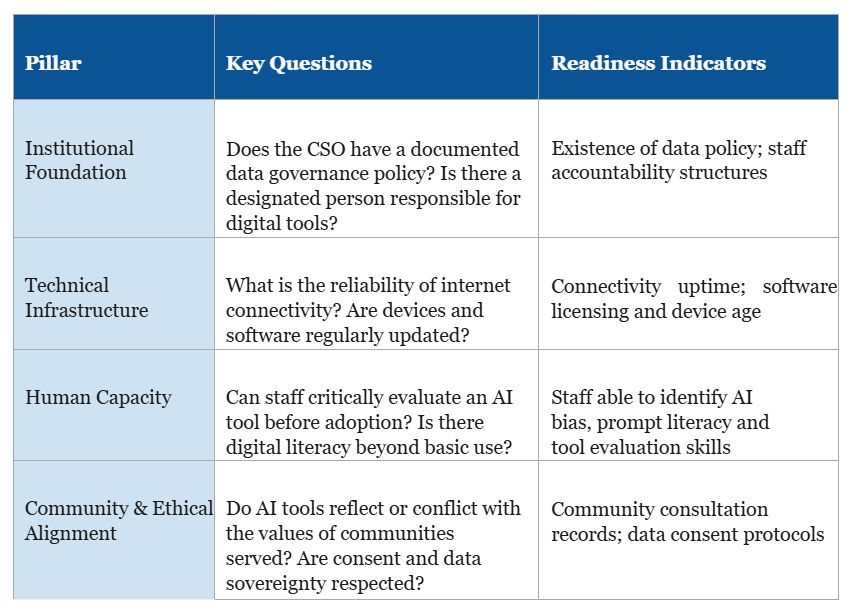

The ITHCE Framework responds to this gap as an original diagnostic and planning tool designed for CSO leaders and boards. Drawing on global digital maturity models and adapted for the political economy, resource constraints, and community accountability demands specific to West African civil society, the framework assesses organisational readiness across five pillars: Institutional Foundation, Technical Infrastructure, Human Capacity, Community, and Ethical Alignment.

A CSO using the ITHCE Framework should resist the temptation to over-score itself on technical infrastructure simply because staff have smartphones and reliable internet connections. The framework asks deeper questions, such as: Can the organisation demonstrate that it has assessed what data an AI tool collects, stores and transmits? Does it have a defined policy for what happens to that data after a project ends? Has community consent been sought not just for data collection, but specifically for AI-mediated processing of that data?

Many West African CSOs operate in hostile legal environments, where state surveillance is a real risk and community trust is the organisation's most valuable asset. In such contexts, these questions are critical.
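For organisations that want to record their answers in a structured way, the three questions above can be kept as a simple pre-adoption checklist. The Python sketch below is illustrative only: the article does not publish a scoring method for ITHCE, so the question keys, the function names, and the rule that an unanswered question counts as a gap are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReadinessQuestion:
    key: str   # hypothetical short label, not part of the ITHCE Framework
    text: str  # question paraphrased from the article


# The three example questions named in the text. A real assessment
# would cover all five pillars; this list is deliberately partial.
QUESTIONS = [
    ReadinessQuestion(
        "data_flows_assessed",
        "Have we assessed what data the AI tool collects, stores and transmits?",
    ),
    ReadinessQuestion(
        "post_project_data_policy",
        "Do we have a defined policy for the data after the project ends?",
    ),
    ReadinessQuestion(
        "ai_specific_consent",
        "Has community consent been sought specifically for AI-mediated processing?",
    ),
]


def open_gaps(answers: dict) -> list:
    """Return the text of every question not answered with an explicit True.

    Assumption: a missing answer is treated as a gap, which guards
    against over-scoring by leaving hard questions blank.
    """
    return [q.text for q in QUESTIONS if answers.get(q.key) is not True]


def ready_to_proceed(answers: dict) -> bool:
    """True only when every question has been answered 'yes'."""
    return not open_gaps(answers)


if __name__ == "__main__":
    # Hypothetical answers: one 'yes', one 'no', one left blank.
    example = {"data_flows_assessed": True, "post_project_data_policy": False}
    for gap in open_gaps(example):
        print("Gap:", gap)
    print("Ready to proceed:", ready_to_proceed(example))
```

Treating blanks as gaps is a design choice rather than a rule from the framework; it simply makes the default outcome "not yet ready" until each question has been answered deliberately.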

Designing a Responsible AI Adoption Roadmap

Knowing that readiness cannot be leapfrogged is only half the challenge. CSOs must also know where to begin. The following five-stage roadmap offers a practical sequence for organisations.

1) Diagnose Before You Deploy: Conduct an honest readiness assessment using ITHCE to identify gaps that would make a specific adoption risky or ineffective.

2) Anchor AI to Organisational Purpose: A CSO should ask: What specific problem does this solve? Does this serve our constituency or our funder's reporting requirements? Purpose anchors responsible adoption.

3) Build Capacity Before Deployment: A deploy-then-train approach is dangerous with AI, where errors propagate silently at scale. Responsible adoption inverts this sequence. CSOs must invest in AI literacy before deployment, so that staff understand how AI generates outputs, can recognise its limitations, and are equipped to critically evaluate results before acting on them.

4) Establish Governance Structures: Designate a responsible person to oversee AI use, and maintain an AI register that tracks the tools in use, the data they process, access controls, and exit plans.

5) Evaluate, Iterate and Share: Share AI adoption lessons, positive and negative, through knowledge hubs and regional CSO networks. Collective learning is a form of capacity that no single organisation can generate alone.
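The AI register described in stage 4 can start as a very small data structure. The sketch below is one possible shape in Python, not a prescribed format: the field names, the example tools and roles, and the 180-day review interval are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class RegisterEntry:
    """One AI tool in the register (all field names are illustrative)."""
    tool_name: str
    responsible_person: str
    data_processed: List[str]
    access_controls: str
    exit_plan: str = ""                   # empty means no plan recorded yet
    last_reviewed: Optional[date] = None  # None means never reviewed


def entries_needing_attention(
    register: List[RegisterEntry],
    today: date,
    max_age_days: int = 180,  # assumed review interval, not from the article
) -> List[Tuple[str, List[str]]]:
    """List each tool that lacks an exit plan or is overdue for review."""
    flagged = []
    for entry in register:
        reasons = []
        if not entry.exit_plan.strip():
            reasons.append("no exit plan")
        if (
            entry.last_reviewed is None
            or (today - entry.last_reviewed).days > max_age_days
        ):
            reasons.append("review overdue")
        if reasons:
            flagged.append((entry.tool_name, reasons))
    return flagged


if __name__ == "__main__":
    # Hypothetical register with two made-up tools.
    register = [
        RegisterEntry(
            tool_name="example-transcription-tool",
            responsible_person="Programme Manager",
            data_processed=["interview audio"],
            access_controls="monitoring and evaluation team only",
            exit_plan="Export transcripts, then delete vendor-held data",
            last_reviewed=date(2025, 1, 10),
        ),
        RegisterEntry(
            tool_name="example-drafting-assistant",
            responsible_person="Executive Director",
            data_processed=["draft grant reports"],
            access_controls="all staff",
        ),
    ]
    for name, reasons in entries_needing_attention(register, today=date(2025, 3, 1)):
        print(name, "->", ", ".join(reasons))
```

A spreadsheet with the same columns would serve equally well; the point is that each tool has a named owner, a record of the data it touches, and an exit plan that someone reviews on a schedule.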

Recommendations

CSO Leaders

Resist adopting AI tools before completing an honest internal readiness assessment.

Treat AI governance as a board-level responsibility, not a programme management one.

Prioritise AI tools built on African data and with African community contexts in mind.

Establish a clear data sovereignty policy specifying what community data can and cannot be used for, including AI-mediated processing.

Donors and INGOs

Allocate dedicated funding to organisational readiness as a legitimate and necessary precursor to AI tool adoption.

Require AI governance plans as a standard component of grant proposals involving digital tools.

Support the development of region-specific AI readiness assessment tools by African civil society researchers.

Create funding mechanisms that allow CSOs to sustain AI tool use beyond the project cycle, breaking cycles of technological dependency.

Regional Governance Actors and ECOWAS

Develop a West Africa Civil Society AI Code of Practice providing minimum standards for data governance, community consent and responsible AI use.

Support regional bodies to serve as AI readiness certification and peer learning hubs for CSOs across the region.

Engage with national AI policy processes to ensure that civil society data rights and digital sovereignty are explicitly protected in emerging regulatory frameworks.

Conclusion

The mobile money revolution taught West Africa and the world that, under the right conditions, communities and organisations can skip generations of technology to reach the frontier. The lesson of mobile money, however, is not that foundations do not matter but that a foundation already existed.

AI adoption in West African civil society requires its own kind of foundation: organisational clarity, data governance discipline, human capacity, ethical accountability to communities and financial sustainability. These cannot be imported. They must be built from within, by organisations that understand their own context.

AI readiness must be continuously assessed, updated and embedded into how organisations plan, govern and evaluate their work. For West African CSOs, building that discipline is not a precondition for relevance; it is the very foundation of it.

The organisations that will benefit most from AI are not those that adopt it first. They are those that adopt it intentionally and with governance. Unlike mobile money, where leapfrogging worked because the foundation already existed, AI readiness must be built deliberately. In a region where civil society is the first and last line of accountability for millions of people, the stakes of getting this wrong are too high for shortcuts.

Disclaimers

This resource was created as part of the AI for Social Change project within TechSoup's Digital Activism Program, with support from Google.org.

AI tools are evolving rapidly, and while we do our best to ensure the validity of the content we provide, some elements may no longer be up to date. If you notice that a piece of information is outdated, please let us know at content@techsoup.org.

"Why AI Readiness for West African Civil Society Cannot Be Leapfrogged", by Uchechi Joy Onyenwe, 2026, for Hive Mind is licensed under CC BY 4.0.

References

Abebe, R., Barocas, S., Kleinberg, J., Levy, K., Raghavan, M., & Robinson, D. G. (2020). Roles for computing in social change. Proceedings of the ACM FAT Conference, 252–260. https://doi.org/10.1145/3351095.3372871

Ada Lovelace Institute. (2021). Algorithmic impact assessment: A case study in healthcare. Ada Lovelace Institute. https://www.adalovelaceinstitute.org/report/algorithmic-impact-assessment-case-study-healthcare/

African Union. (2024). Continental Artificial Intelligence Strategy. African Union Commission. https://au.int/en/documents/20240101/continental-ai-strategy

Aker, J. C., & Mbiti, I. M. (2010). Mobile phones and economic development in Africa. Journal of Economic Perspectives, 24(3), 207–232. https://doi.org/10.1257/jep.24.3.207

Akpan-Obong, P. (2009). Information and communication technologies in Nigeria: Prospects, challenges, and lessons for Africa. Peter Lang Publishing.

Birhane, A. (2021). Algorithmic injustice: A relational ethics approach. Patterns, 2(2), 100205. https://doi.org/10.1016/j.patter.2021.100205

CIVICUS. (2023). State of Civil Society Report 2023. CIVICUS. https://www.civicus.org/index.php/state-of-civil-society-report-2023

Couldry, N., & Mejias, U. A. (2019). Data colonialism: Rethinking big data's relation to the contemporary subject. Television & New Media, 20(4), 336–349. https://doi.org/10.1177/1527476418796632

Davison, R., Vogel, D., Harris, R., & Jones, N. (2000). Technology leapfrogging in developing countries: An inevitable luxury? The Electronic Journal of Information Systems in Developing Countries, 1(1), 1–10. https://doi.org/10.1002/j.1681-4835.2000.tb00002.x

Floridi, L., Cowls, J., Beltrametti, M., Chatila, R., Chazerand, P., Dignum, V., & Vayena, E. (2018). An ethical framework for a good AI society. Minds and Machines, 28(4), 689–707. https://doi.org/10.1007/s11023-018-9482-5

Gwagwa, A., Kazim, E., Hilliard, A., & Arabo, A. (2022). The role of the African value of Ubuntu in global AI inclusion discourse. Patterns, 3(4), 100462. https://doi.org/10.1016/j.patter.2022.100462

Heeks, R. (2008). ICT4D 2.0: The next phase of applying ICT for international development. Computer, 41(6), 26–33. https://doi.org/10.1109/MC.2008.192

International Telecommunication Union. (2021). Measuring the information society report. ITU Publications. https://www.itu.int/en/ITU-D/Statistics/Documents/facts/FactsFigures2021.pdf

Jack, W., & Suri, T. (2011). Mobile money: The economics of M-PESA (NBER Working Paper No. 16721). National Bureau of Economic Research. https://doi.org/10.3386/w16721

James, J. (2003). Bridging the global digital divide. Edward Elgar Publishing.

Jobin, A., Ienca, M., & Vayena, E. (2019). The global landscape of AI ethics guidelines. Nature Machine Intelligence, 1(9), 389–399. https://doi.org/10.1038/s42256-019-0088-2

Mohamed, S., Png, M.-T., & Isaac, W. (2020). Decolonial AI: Decolonial theory as sociotechnical foresight in artificial intelligence. Philosophy & Technology, 33, 659–684. https://doi.org/10.1007/s13347-020-00405-8

NTEN. (2019). Digital maturity model for nonprofits. Nonprofit Technology Network. https://www.nten.org/digital-maturity

Oxford Insights & IDRC. (2023). Government AI readiness index 2023. Oxford Insights. https://oxfordinsights.com/ai-readiness/ai-readiness-index/

Research ICT Africa. (2021). Artificial intelligence for Africa: An opportunity for growth, development and democratisation. Research ICT Africa. https://researchictafrica.net/publication/artificial-intelligence-for-africa/

Steinmueller, W. E. (2001). ICTs and the possibilities for leapfrogging by developing countries. International Labour Review, 140(2), 193–210. https://doi.org/10.1111/j.1564-913X.2001.tb00220.x

Toyama, K. (2015). Geek heresy: Rescuing social change from the cult of technology. PublicAffairs.

Unwin, T. (2009). ICT4D: Information and communication technology for development. Cambridge University Press.