This is the second part of "The Responsible AI Roadmap for CSOs" guide. To learn more, read the article "From Tension to Principle: How CSOs Can Lead on Responsible AI", the introduction to the guide (with the publishing calendar for each of the parts), and Part 1: LOOK - Preparing the Ground.

In Part 2: CREATE, get ready to identify your organization's most significant principles, and learn how to define them.

2.1 The Foundational Value Finder Approach

This framework is adapted from Olivia Gambelin's book Responsible AI, which offers a practical method for organizations to identify values that align across regulatory, ecosystem, and organizational dimensions.

→ Use the Identify | Define AI Principles Worksheet to work through this section. The worksheet contains the alignment assessment table and all supporting references.

Setting the stage:

If you gathered tensions through pre-session interviews, begin by presenting the synthesized insights to the group. Share the patterns you heard: the hopes, fears, and non-negotiables that emerged. Allow time for the group to respond and add to what was captured.

If you're surfacing tensions live, start with the 5 Questions. Allow 5 minutes for individual reflection, then 15-20 minutes for group sharing. Capture everything on a flip chart or shared document.

With tensions surfaced, your team now has:

Insights from interviews or reflection (what you care about protecting)

An AI inventory showing what's in use and what concerns exist

Existing wisdom: your organization's values, relevant regulations, and benchmarks from the field

Now you bring this together to identify which principles matter most.

Building your principles list:

Start by compiling all the principles you've gathered through LOOK:

Common Responsible AI principles from frameworks and benchmarks research (the 6-12 most cited)

Your organization's existing values and principles

Any principles that emerged from your tensions conversations (for example, if "authenticity" came up repeatedly, that might point to a principle like "Human at the Core")

Principles implied by your regulatory landscape

You'll likely have 12-20 candidate principles. That's fine. The next step helps you prioritize.

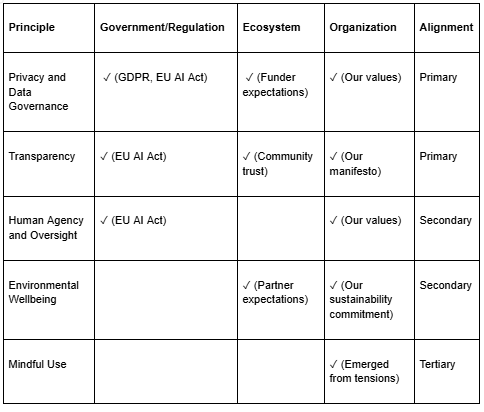

The alignment assessment:

For each principle, assess whether it's relevant across three dimensions:

(Exemplary draft)

→ The Identify | Define AI Principles Worksheet includes this table ready to fill in, along with the EU AI Act & Principles Alignment reference to help you complete the Government/Regulation column.

How the LOOK phase informs this:

Government/Regulation column: Your regulatory landscape research tells you which principles are legally required or encouraged.

Ecosystem column: Your understanding of funder, partner, and community expectations tells you which principles matter to your stakeholders.

Organization column: Your existing values AND your tensions insights tell you which principles are core to who you are.

The tensions conversation is particularly valuable here. If your team expressed strong concern about authenticity, that points you toward principles like "Human at the Core." If data privacy came up repeatedly in the inventory, that signals "Privacy and Data Governance" as primary.

Alignment scoring:

Primary (3 checks): Principle appears across all three sources. This is foundational, non-negotiable.

Secondary (2 checks): Principle appears in two sources. Important, supports decision-making when primary principles conflict.

Tertiary (1 check): Principle appears in one source only. May still be important to your organization specifically, even if not emphasized elsewhere.

Aim for 3-7 primary principles. More than that becomes unwieldy; fewer may miss important dimensions. These primary principles become your focus for detailed definition.
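For teams that keep their assessment table in a spreadsheet or script, the scoring rule above can be sketched in a few lines of Python. This is a minimal illustration, not part of the worksheet: the `Principle` class, the `group_by_tier` helper, the "Unaligned" label for zero checks, and the check marks in the sample data are all assumptions made for the example. Your own checks should come from your LOOK research.

```python
from dataclasses import dataclass

# The three alignment dimensions from the assessment table.
DIMENSIONS = ("regulation", "ecosystem", "organization")

# Number of checks -> tier, as described in the alignment scoring.
TIERS = {3: "Primary", 2: "Secondary", 1: "Tertiary"}


@dataclass
class Principle:
    """One row of the alignment table (hypothetical structure)."""
    name: str
    regulation: bool = False
    ecosystem: bool = False
    organization: bool = False

    def checks(self) -> int:
        # Count how many dimensions this principle appears in.
        return sum(getattr(self, d) for d in DIMENSIONS)

    def tier(self) -> str:
        # "Unaligned" (zero checks) is an assumed label, not from the guide.
        return TIERS.get(self.checks(), "Unaligned")


def group_by_tier(principles):
    """Group principle names under their tier label."""
    groups = {}
    for p in principles:
        groups.setdefault(p.tier(), []).append(p.name)
    return groups


# Illustrative data only: these check marks are invented for the example.
candidates = [
    Principle("Privacy and Data Governance", True, True, True),
    Principle("Human at the Core", False, True, True),
    Principle("Mindful Use", False, False, True),
]

groups = group_by_tier(candidates)
primary_count = len(groups.get("Primary", []))

# The guide suggests aiming for 3-7 primary principles.
within_target = 3 <= primary_count <= 7

for tier_name, names in groups.items():
    print(f"{tier_name}: {', '.join(names)}")
print(f"Primary principles within the 3-7 target: {within_target}")
```

The count is only a starting point for conversation. A tertiary principle that your team cares about deeply can still be promoted by agreement.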

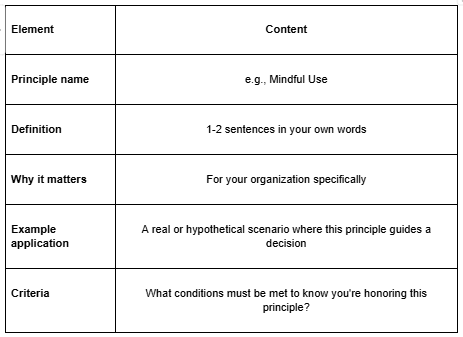

2.2 From Identification to Definition

Identifying principles is only half the work. A principle like "fairness" or "transparency" means different things to different organizations. Your task is to define what each principle means in your context.

→ The Identify | Define AI Principles Worksheet includes a Definition template for each principle. Use it to capture your team's responses to the questions below.

Defining questions:

For each principle you've identified as primary (and optionally secondary), work through these questions as a team:

What does this principle mean to our organization in the context of AI? How does it connect to our mission, values, and the specific work we do? What makes our interpretation unique?

Why is this principle important for us? What are the potential benefits if we uphold it? What are the risks or negative consequences if we don't?

Can you think of a specific example where this principle would be critically important or challenged? Ground it in your actual work: a real scenario or a realistic hypothetical.

What criteria would help us evaluate whether we're honoring this principle? What conditions must be met?

Case study: Khan Academy

Khan Academy adopted the tenet "Achieving Educational Goals", but they didn't stop at the name. They defined criteria that clarify what living up to this principle requires:

"There is a clear educational goal that can be achieved through the use of AI"

"There is a monitoring & evaluation plan to determine the impact"

"There are mechanisms in place to prevent non-educational uses of the AI"

Notice that these aren't detailed implementation rules yet; they're principle-level criteria. They define what the principle demands without specifying exactly how to achieve it. That specificity comes later, when you develop policies (find out more here) and conduct risk assessments (find out more here).

The value of defining principles at this level: a team can now ask, "Does our proposed AI feature have a clear educational goal? Do we have a plan to measure impact? What mechanisms would prevent misuse?" The principle provides the questions; the policy and risk work provide the answers.

Case study: ChangemakerXchange

CXC defined "Mindful Use" with concrete questions their team asks before any AI use:

"Is AI really needed for this task?"

"Is it really aligned with our values?"

"What do I lose when I use this tool for this task?"

They also specified what this looks like in practice: "Some tasks call for our full presence and care, such as writing a heartfelt message to members, developing a theory of change, or designing a session for one of our gatherings. When such things are over-delegated to AI, they risk losing depth, authenticity or meaning."

The principle becomes actionable because it's defined in terms of their specific work.

Output: → Use the Defining Responsible AI Principles sheet in the Identify | Define AI Principles Worksheet to capture this output for each principle.

These definitions become the foundation for your governance structures and policies.

2.3 The Importance of Diverse Voices

Who participates in this process matters as much as the process itself.

Why diversity matters:

People closest to your mission see risks that leadership misses

Frontline staff understand how AI actually affects daily work

Community members can speak to impacts that internal teams may overlook

Diverse perspectives prevent blind spots and groupthink

When people participate in creating principles, they become guardians of those values

Who should be in the room:

Leadership (for buy-in and authority to commit)

Program/operations staff (for practical reality)

Technical staff, if you have them (for feasibility insights)

Communications/external relations (for stakeholder perspective)

Administrative staff (often early AI adopters for efficiency tools)

If possible: community members, beneficiaries, or partners

CXC's Manifesto emerged from deep listening across their team. Khan Academy's framework is maintained by a cross-functional working group including product, data, and user research teams, ensuring diverse perspectives shape their approach.

Facilitation tips:

Create psychological safety: no judgment, no "wrong" answers

Use individual reflection before group discussion (prevents dominant voices from steering)

Explicitly invite quieter participants to share

Document dissenting views, not just consensus

Consider anonymous input mechanisms for sensitive concerns

Name that disagreement is information, not failure

Avoiding "corporate theater":

The goal is not to produce a polished document that leadership signs off on. The goal is genuine co-creation: principles that your team believes in because they helped shape them.

If your first session surfaces real disagreement, that's a feature, not a bug. Those tensions are information. They may indicate that you need more conversation before committing to a principle, or that a principle needs to be defined more carefully to accommodate different perspectives.

The process of creating principles is as important as the principles themselves.

Make sure to continue with Part 3: Putting It Into Practice, to learn how to identify the AI principles that matter most for your CSO; Part 4: CREATE - Defining AI Governance Ownership for CSOs, to find out about different ways of taking ownership of AI in your organisation; Part 5: How CSOs Can Create AI Policies That Actually Live, to read about the process of creating AI rules; and Part 6: How CSOs Can Assess AI Risk Before Harm Happens, to learn how to address the risks that come with AI.

This article is the second part of the series, "The Responsible AI Roadmap for CSOs," developed as part of the AI for Social Change project within TechSoup’s Digital Activism Program, with support from Google.org.

AI tools are evolving rapidly, and while we do our best to ensure the validity of the content we provide, sometimes some elements may no longer be up to date. If you notice that a piece of information is outdated, please let us know at content@techsoup.org.

About the Author

Ayşegül Güzel is a Responsible AI Governance Architect who helps mission-driven organizations turn AI anxiety into trustworthy systems. Her career bridges executive social leadership, including founding Zumbara, the world's largest time bank network, with technical AI practice as a certified AI Auditor and former Data Scientist. She guides organizations through complete AI governance transformations and conducts technical AI audits. She teaches at ELISAVA and speaks internationally on human-centered approaches to technology. Learn more at https://aysegulguzel.info or subscribe to her newsletter AI of Your Choice at https://aysegulguzel.substack.com.