Before you dive into this part, make sure you've read the article on How CSOs can lead on Responsible AI, the Introduction to this Practical Guide, Part 1: LOOK - Preparing the Ground, and Part 2: CREATE - Identifying and Defining Your Principles.

Ready to begin? Here's how to structure your preparation and session.

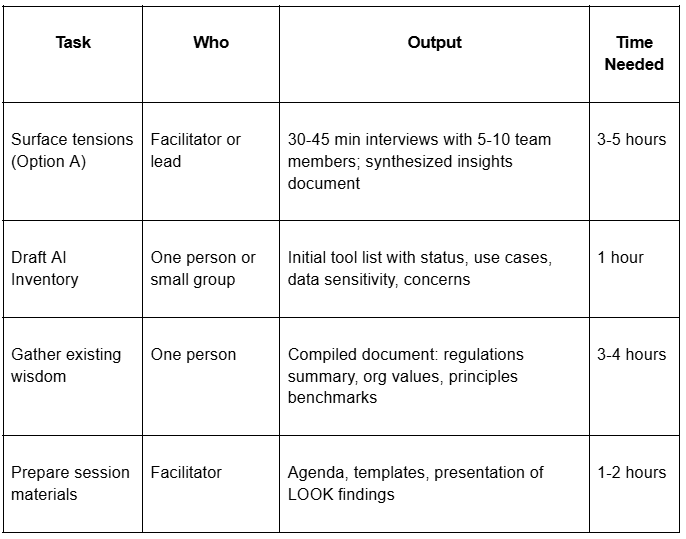

3.1 Before the Session: LOOK Phase Preparation

Timeline: 1-2 weeks before the CREATE session

This table outlines the tasks, expected outputs, required time, and the person responsible for each step to be completed before the CREATE session. You can view the full text from the table in the file provided here.

If you don't have time for pre-work:

You can do everything in the session, but plan for 2-2.5 hours instead of 90 minutes, and accept that the inventory and wisdom-gathering will be less thorough. Consider a follow-up session to complete what you started.

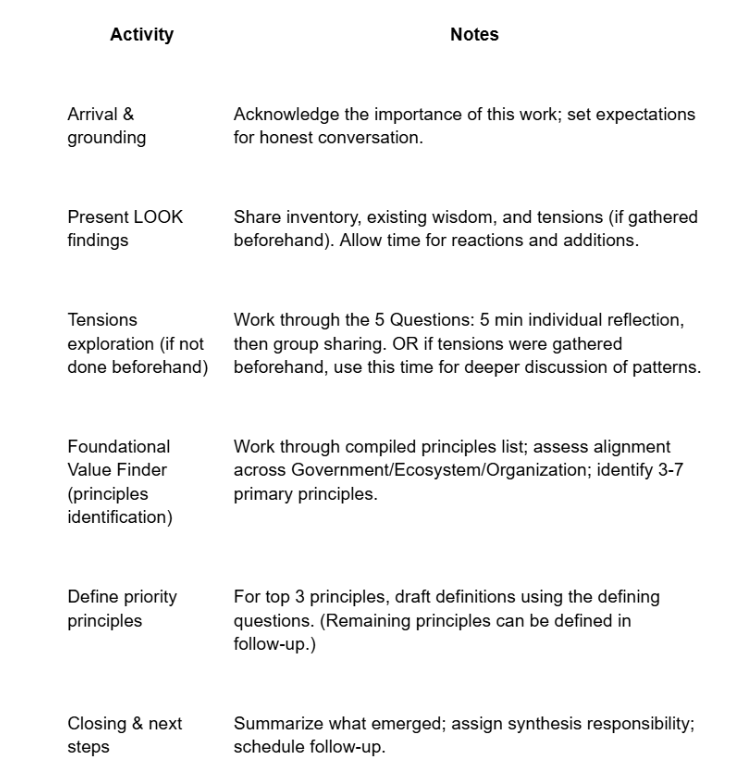

3.2 The CREATE Session: A 90-Minute Agenda

Participants: 6-12 people representing diverse roles and perspectives

Materials needed:

Flip chart or shared digital whiteboard

LOOK findings presentation (if gathered beforehand)

Principle identification and definition template (provided in the Resources)

List of common Responsible AI principles with brief definitions (provided in the Resources)

Agenda:

This table presents the proposed agenda for the CREATE session. You can view the full text from the table in the file provided here.

Expected outcomes:

Shared understanding of current AI use and tensions

Validated/expanded AI inventory

Prioritized list of 3-7 primary principles

Draft definitions for top 3 principles

Clarity on what needs further discussion

Momentum for the next session

3.3 After the Session

Within one week:

Synthesize and document. Write up what emerged: the tensions named, the principles identified, the draft definitions, the questions still open. Create a clean document that captures the team's work.

Check for accuracy. Share back to participants: "Did I capture this right? What's missing? What needs refinement?"

Identify gaps. Are there voices missing from the conversation? Perspectives not represented? Consider who else should be consulted before finalizing.

Within two weeks:

Complete definitions. For any principles not fully defined in the session, complete the definition work. This can be done by a small working group, then validated with the larger team.

Test against reality. Take a current AI decision your organization is facing. Ask: "Based on our draft principles, what should we do?" Does the principle provide useful guidance? If not, the definition may need refinement.

Ongoing:

Establish review rhythm. Commit to revisiting your principles at a regular interval. Six months is a good starting point. As CXC states: these are living documents that evolve as technology changes and understanding deepens.

Connect to governance. Once principles are defined, you're ready for the next phase: establishing who owns this conversation and how decisions will be made. See Article 2 in this series.

From Principles to Action - A Bridge

With your principles identified and defined, you've completed the foundation. But principles alone don't make decisions; they need to flow into structures, policies, and practices.

Principles Are Just the Beginning

Once you've identified and defined your principles, the next questions are: Who holds this conversation going forward? How do these principles become documented agreements? How do we assess risks and make decisions?

From principles to guardrails:

Khan Academy's journey illustrates the path. Their tenet "Achieving Educational Goals" became guardrail 1.4: "There are mechanisms in place to prevent non-educational uses of the AI." That guardrail then informed a risk assessment: the risk of "inappropriate or harmful uses" was rated high likelihood, high impact. That rating in turn led to specific mitigations: moderation systems, parent notifications, terms of service, and account controls.

Principle → Guardrail → Risk Assessment → Mitigation
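
If your team tracks this chain in a spreadsheet or a small script, the structure can be sketched roughly as below. This is a minimal illustration in Python, not Khan Academy's actual tooling: the class names (`Principle`, `Guardrail`, `RiskAssessment`) and the helper functions `trace` and `unmitigated` are hypothetical, and only the example values come from the case described above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RiskAssessment:
    risk: str
    likelihood: str  # e.g. "high", "medium", "low"
    impact: str      # e.g. "high", "medium", "low"
    mitigations: List[str] = field(default_factory=list)


@dataclass
class Guardrail:
    ref: str         # identifier used in your own documents, e.g. "1.4"
    statement: str
    risks: List[RiskAssessment] = field(default_factory=list)


@dataclass
class Principle:
    name: str
    guardrails: List[Guardrail] = field(default_factory=list)


def trace(principle: Principle) -> List[str]:
    """Return one line per principle -> guardrail -> risk -> mitigation path."""
    lines = []
    for guardrail in principle.guardrails:
        for risk in guardrail.risks:
            for mitigation in risk.mitigations:
                lines.append(
                    f"{principle.name} -> {guardrail.ref} -> {risk.risk} "
                    f"({risk.likelihood}/{risk.impact}) -> {mitigation}"
                )
    return lines


def unmitigated(principle: Principle) -> List[str]:
    """Return refs of guardrails that have no mitigation recorded for any risk."""
    return [
        g.ref
        for g in principle.guardrails
        if not any(r.mitigations for r in g.risks)
    ]


# Example values taken from the Khan Academy case described in the text.
khan = Principle(
    name="Achieving Educational Goals",
    guardrails=[
        Guardrail(
            ref="1.4",
            statement="There are mechanisms in place to prevent "
                      "non-educational uses of the AI.",
            risks=[
                RiskAssessment(
                    risk="inappropriate or harmful uses",
                    likelihood="high",
                    impact="high",
                    mitigations=[
                        "moderation systems",
                        "parent notifications",
                        "terms of service",
                        "account controls",
                    ],
                )
            ],
        )
    ],
)

for line in trace(khan):
    print(line)
```

Keeping the chain in one structure makes it easy to spot a guardrail that nobody has yet assessed or mitigated: `unmitigated(khan)` returns the refs of such guardrails, which gives the working group a concrete to-do list.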

From manifesto to policy:

CXC's Mindful AI Manifesto (the "why") connects directly to their Mindful AI Policy (the "how"). The Manifesto principle of "Data Privacy & Trust" becomes operational in the Policy's "Red Light" rule: "Confidential or sensitive community/member data must not be input into public, third-party generative AI models."

Your principles will similarly flow into governance structures, policies, and risk assessment processes.

Continue the guide with Part 4: CREATE - Defining AI Governance Ownership for CSOs, which covers different ways of taking ownership of AI in your organisation; Part 5: How CSOs Can Create AI Policies That Actually Live, which walks through the process of creating AI rules; and Part 6: How CSOs Can Assess AI Risk Before Harm Happens, which explains how to address the risks that come with AI.

This article is the third part of the series, "The Responsible AI Roadmap for CSOs," developed as part of the AI for Social Change project within TechSoup’s Digital Activism Program, with support from Google.org.

AI tools are evolving rapidly, and while we do our best to ensure the validity of the content we provide, some elements may no longer be up to date. If you notice that a piece of information is outdated, please let us know at content@techsoup.org.

About the Author

Ayşegül Güzel is a Responsible AI Governance Architect who helps mission-driven organizations turn AI anxiety into trustworthy systems. Her career bridges executive social leadership, including founding Zumbara, the world's largest time bank network, with technical AI practice as a certified AI Auditor and former Data Scientist. She guides organizations through complete AI governance transformations and conducts technical AI audits. She teaches at ELISAVA and speaks internationally on human-centered approaches to technology. Learn more at https://aysegulguzel.info or subscribe to her newsletter AI of Your Choice at https://aysegulguzel.substack.com.