Before you dive into this part, make sure you've read the article on How CSOs can lead on Responsible AI, the Introduction to this Practical Guide, Part 1: LOOK - Preparing the Ground, Part 2: CREATE - Identifying and Defining Your Principles, Part 3: Putting It Into Practice, Part 4: CREATE - Defining AI Governance Ownership for CSOs, and Part 5: How CSOs Can Create AI Policies That Actually Live.

Two scenes, one competency.

A CSO program manager sits in front of a yellow light scenario from their newly written AI policy. They want to use an AI tool to help triage incoming requests from vulnerable beneficiaries. The policy says: pause and ask. But ask what, exactly? And ask whom?

Meanwhile, in Malaga, Spain, a woman named Lina went to the police to report threats from her ex-partner. Her case was registered in VioGén, Spain's algorithmic system for assessing gender violence risk. The algorithm asked 35 questions and classified her threat level as "medium." She requested a restraining order. It was denied. Three weeks later, Lina was dead, killed in a house fire allegedly set by the ex-partner, who still had a key to her home.

These two scenes share the same question: Who is looking carefully at what this algorithm does to people?

The yellow light scenarios from your AI policy are the starting point for risk assessment. The policy tells you when to pause. Risk assessment tells you how to look deeper. And here's what makes this competency uniquely powerful for civil society: the detective skills you use to govern your own AI tools are the same skills you need to hold power accountable when AI systems are imposed on the communities you serve.

This is the BUILD phase of the journey, where everything you've created becomes operational. This article is about building both muscles.

Why AI Risk Is Different: The Sociotechnical Reality

AI doesn't operate in a vacuum. It operates inside messy, human, social systems. This makes it a sociotechnical technology, and that changes everything about how we assess risk.

Consider the Netherlands' System Risk Indication (SyRI), a welfare fraud detection algorithm that cross-referenced citizens' personal data across government databases. SyRI was deployed exclusively in low-income and immigrant neighborhoods. In February 2020, the District Court of The Hague ruled it violated Article 8 of the European Convention on Human Rights. The court found insufficient safeguards against discrimination. What made this ruling possible? A coalition of six human rights organizations and the Netherlands' largest trade union brought the case, supported by an analysis from the UN Special Rapporteur on extreme poverty. Civil society did the detective work that stopped a harmful system.

Or consider Jordan, where the Takaful cash transfer program, financed by the World Bank, uses an algorithm with 57 socio-economic indicators to rank families' poverty levels and decide who receives support. A Human Rights Watch investigation documented how the algorithm embedded flawed assumptions about what poverty looks like. Families were penalized for owning cars they used to transport water, or for electricity consumption driven by poor insulation they couldn't afford to fix. One woman in one of Jordan's poorest villages told investigators: "The car destroyed us."

The key insight: harm is use-case specific. The same tool can be safe for one purpose and dangerous for another. There are no universal fixes, only context-specific questions, and the more CSOs understand about AI Risk Assessment, the more they can help.

What Risk Assessment Actually Is, And When You Need One

The field is full of intimidating acronyms: FRIA, AIA, ARIA. The names change, but the skeleton is the same. Risk assessment means being a detective for your AI system: asking curious and intelligent questions, documenting findings, and deciding what to do about them.

The CIDA framework (Context, Input, Decision, Action) gives your investigation structure. What is the social and political environment this algorithm operates in? What data goes in, and what biases might it carry? How are decisions made? What happens downstream? This lens works whether the algorithm is yours or belongs to a government agency making decisions about the people you serve.

To anticipate harms, you don't need to start from scratch. AI incident databases, like the AI Incident Database, the OECD AI Incidents Monitor, and the AI Risk Repository, document what has gone wrong in the real world. These are your case files before you begin your own investigation.

And if all of this sounds complicated, remember what I say in my workshops: if I explained risk management frameworks to my grandmother, she would say, "You are telling me as if you have discovered something new. This is how we humans have been operating for ages." Before you act, you think about who might be affected. You ask around. You consider the worst case. Risk assessment simply gives that instinct a structure.

When do you need one? It depends on your relationship with the AI system. If you're procuring a third-party tool, the most common CSO scenario, risk assessment is part of your due diligence. If you're already using AI tools without any assessment, probably the most honest starting point for many CSOs, a retrospective assessment is urgent. If AI systems are being used in your communities, for example for welfare scoring or automated immigration decisions, you need risk assessment literacy to scrutinize and advocate. This is uniquely civil society work. And in the EU, the AI Act makes risk assessment mandatory for high-risk systems.

Two Approaches CSOs Can Use

These aren't competing methods but complementary lenses, each revealing different things.

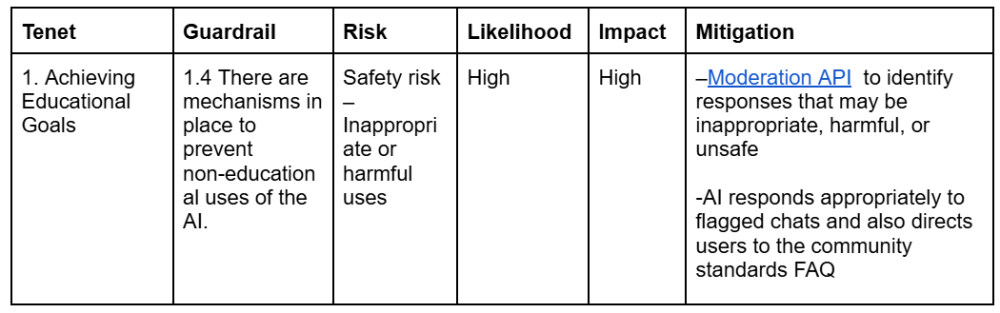

The Values-Focused Approach starts from your principles. Khan Academy demonstrated this when they adopted the nine tenets from the Ethical Framework for AI in Education, then created internal guardrails for their product teams. Their first tenet, Achieving Educational Goals, led to guardrail 1.4: "mechanisms in place to prevent non-educational uses of the AI." From there, they could rate the risk and build specific mitigations. Principle → Guardrail → Risk → Mitigation. Your CSO can follow the same path, and use the same lens to evaluate external systems.

Caption: Table 1. Example of the spreadsheet created and used by Khan Academy. Source: Khan Academy’s Framework for Responsible AI in Education, by Khan Academy, April 3, 2025, Khan Academy Blog. © 2025 Khan Academy. All rights reserved. Not covered by the CC BY 4.0 International license of this content piece. You can view the full text from the table in the file provided here.

The Stakeholder-Focused Approach starts from the people affected. When I led a session with around 70 leaders from 30 education NGOs, working with Tech To The Rescue, we examined the Wisconsin Dropout Early Warning System, an algorithm that used data including race and disciplinary records to predict which students might drop out. The participants took roles as Principal, Parent, Data Scientist, Superintendent, and Teacher. In 30 minutes, they produced a comprehensive roadmap for transforming the system.

For instance, when the "skeptical Parent" at the table asked why her child's race was being used to predict failure, the entire group shifted: the Data Scientist proposed removing race and ZIP codes as input features, the Teacher suggested replacing the stigmatizing "high-risk" label with actionable insights like "may benefit from extra support in algebra," and the Principal reframed the system's entire purpose from "predicting dropouts" to "identifying pathways to success." One role's question changed the whole design. Diverse perspectives don't just improve risk assessment; they transform it.

Both approaches converge on the same core formula: Likelihood × Magnitude = Risk Level. This isn't complex math. It's an honest, structured conversation.
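To show how little math is involved, here is a minimal Python sketch of that formula. Everything specific in it is an assumption made for illustration: the 1 to 5 scale, the band thresholds, the `Harm`, `band`, and `prioritize` names, and the example scores. Your team would agree on its own scale and thresholds in conversation.

```python
from dataclasses import dataclass

# Assumption: both dimensions use a 1-5 scale. The article does not prescribe one.
SCALE = range(1, 6)


@dataclass
class Harm:
    """One potential harm, scored by the team (hypothetical structure)."""
    name: str
    likelihood: int  # 1 = rare, 5 = almost certain
    magnitude: int   # 1 = minor, 5 = severe

    def __post_init__(self):
        # Reject scores outside the agreed scale so typos surface early.
        if self.likelihood not in SCALE or self.magnitude not in SCALE:
            raise ValueError("likelihood and magnitude must be whole numbers from 1 to 5")

    @property
    def risk_level(self) -> int:
        # Likelihood x Magnitude = Risk Level
        return self.likelihood * self.magnitude


def band(score: int) -> str:
    """Translate a score into a label. Thresholds are illustrative assumptions."""
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


def prioritize(harms):
    """Return harms ordered from highest to lowest risk level."""
    return sorted(harms, key=lambda h: h.risk_level, reverse=True)


# Example scores are invented for illustration, not taken from a real assessment.
harms = [
    Harm("dignity loss", likelihood=2, magnitude=3),
    Harm("opportunity loss", likelihood=3, magnitude=5),
    Harm("privacy violation", likelihood=2, magnitude=4),
]

for harm in prioritize(harms):
    print(f"{harm.name}: {harm.risk_level} ({band(harm.risk_level)})")
```

The numbers matter less than the discussion that produces them. If two people at the table score the same harm very differently, that disagreement is itself a finding worth documenting.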

A Risk Assessment in Practice

Let's make this concrete. A service delivery CSO is considering an AI chatbot for intake screening of people seeking legal aid. Walk through the CIDA lens:

Context: What's the purpose? Who decided to adopt this? What's the regulatory environment?

Input: What data does the chatbot use? What happens to people who can't navigate digital interfaces?

Decision: What does the chatbot recommend? Is there human review? What if it misclassifies someone's urgency?

Action: What happens downstream? Could someone be denied timely help?

Mapping these questions surfaces specific harms, such as opportunity loss, dignity loss, and privacy violation, which your team then scores and prioritizes. Then you brainstorm mitigations: human oversight at critical decision points, a manual pathway for people the chatbot can't serve well, data anonymization protocols.

But here's the honest truth: you cannot eliminate all risk. After mitigation, some risk remains. This is residual risk, and it's a reality, not a failure. This is where AI governance controls step in: continuous monitoring and clear triggers for re-assessment. Your findings get documented in a risk register, a living record that becomes the Tree's working document and the evidence base for future policy updates.
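A risk register does not need special software; a shared spreadsheet works. For teams that prefer something scriptable, the sketch below shows one possible shape for a register entry in Python. The field names, the `needs_reassessment` rule, the threshold of 8, and the example entries and dates are all assumptions made for illustration, not a prescribed format.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class RegisterEntry:
    """One row of a risk register (hypothetical structure)."""
    harm: str
    inherent_score: int     # likelihood x magnitude before mitigation
    mitigations: list[str]  # what the team agreed to do
    residual_score: int     # score re-estimated by the team after mitigation
    owner: str              # who watches this risk
    review_by: date         # scheduled re-assessment date

    def needs_reassessment(self, today: date, threshold: int = 8) -> bool:
        # Two illustrative triggers: the review date has arrived,
        # or the residual risk is still at or above the agreed threshold.
        return today >= self.review_by or self.residual_score >= threshold


# Example entries for the legal aid chatbot scenario; all values are invented.
register = [
    RegisterEntry(
        harm="misclassified urgency delays legal aid",
        inherent_score=15,
        mitigations=["human review at critical decision points", "manual intake pathway"],
        residual_score=6,
        owner="intake team lead",
        review_by=date(2026, 1, 15),
    ),
    RegisterEntry(
        harm="privacy violation",
        inherent_score=8,
        mitigations=["data anonymization protocol"],
        residual_score=4,
        owner="data protection lead",
        review_by=date(2026, 6, 1),
    ),
]

# Which entries should the team revisit on a given day?
check_date = date(2026, 2, 1)
due = [entry.harm for entry in register if entry.needs_reassessment(check_date)]
print(due)
```

Keeping the inherent and residual scores side by side makes it visible how much each mitigation is expected to help, and the review date turns "continuous monitoring" into a concrete commitment.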

The Living Cycle

Risk assessment is not a one-time document. It is a living process that connects everything in this series. Your findings feed back into your policy: yellow light scenarios become clearer, new red lines emerge, green lights are confirmed. As AI operates in the real world, new risks surface and get added to the register.

When this mindset becomes part of your culture, you move from reactive to proactive: Ethics by Design. And over time, risk-focused thinking evolves into impact-focused thinking, where your AI choices don't just prevent harm but actively create positive value for the communities you serve.

An Invitation

Your principles defined what you believe. Your Tree defined who holds the conversation. Your policy defined what you've agreed. Risk assessment defines what you're watching for, and it completes the cycle by feeding everything you learn back into the system.

Pick a yellow light. Gather your Tree. Ask the questions.

And remember: this competency doesn't stop at your own walls. In the Netherlands, a coalition of NGOs stopped a discriminatory welfare algorithm through the courts. In Spain, Eticas, a civil society organization, conducted an adversarial audit of VioGén after the Interior Ministry refused to allow an independent review. Working with the Ana Bella Foundation, publicly available data, and survivor interviews, they found that police accepted the algorithm's risk classification 95% of the time, effectively outsourcing life-or-death decisions to a formula. Between 2003 and 2021, 71 women who had previously reported abuse were killed while in the system, all classified at "negligible" or "medium" risk.

The same questions, the same frameworks, the same insistence on diverse voices: these are the tools civil society needs to hold powerful institutions accountable. The detective work is both inward and outward. That's what makes it uniquely yours.

→ The companion guide provides a step-by-step process and templates to conduct your first risk assessment as a team, whether you're assessing your own AI or examining a system that affects your community.

Your Feedback Matters

What did you think of this text? Take 30 seconds to share your feedback and help us create meaningful content for civil society!

This article is the sixth part of the series, "The Responsible AI Roadmap for CSOs," developed as part of the AI for Social Change project within TechSoup’s Digital Activism Program, with support from Google.org.

AI tools are evolving rapidly, and while we do our best to ensure the validity of the content we provide, sometimes some elements may no longer be up to date. If you notice that a piece of information is outdated, please let us know at content@techsoup.org.

About the Author

Ayşegül Güzel is a Responsible AI Governance Architect who helps mission-driven organizations turn AI anxiety into trustworthy systems. Her career bridges executive social leadership, including founding Zumbara, the world's largest time bank network, with technical AI practice as a certified AI Auditor and former Data Scientist. She guides organizations through complete AI governance transformations and conducts technical AI audits. She teaches at ELISAVA and speaks internationally on human-centered approaches to technology. Learn more at https://aysegulguzel.info or subscribe to her newsletter AI of Your Choice at https://aysegulguzel.substack.com.
